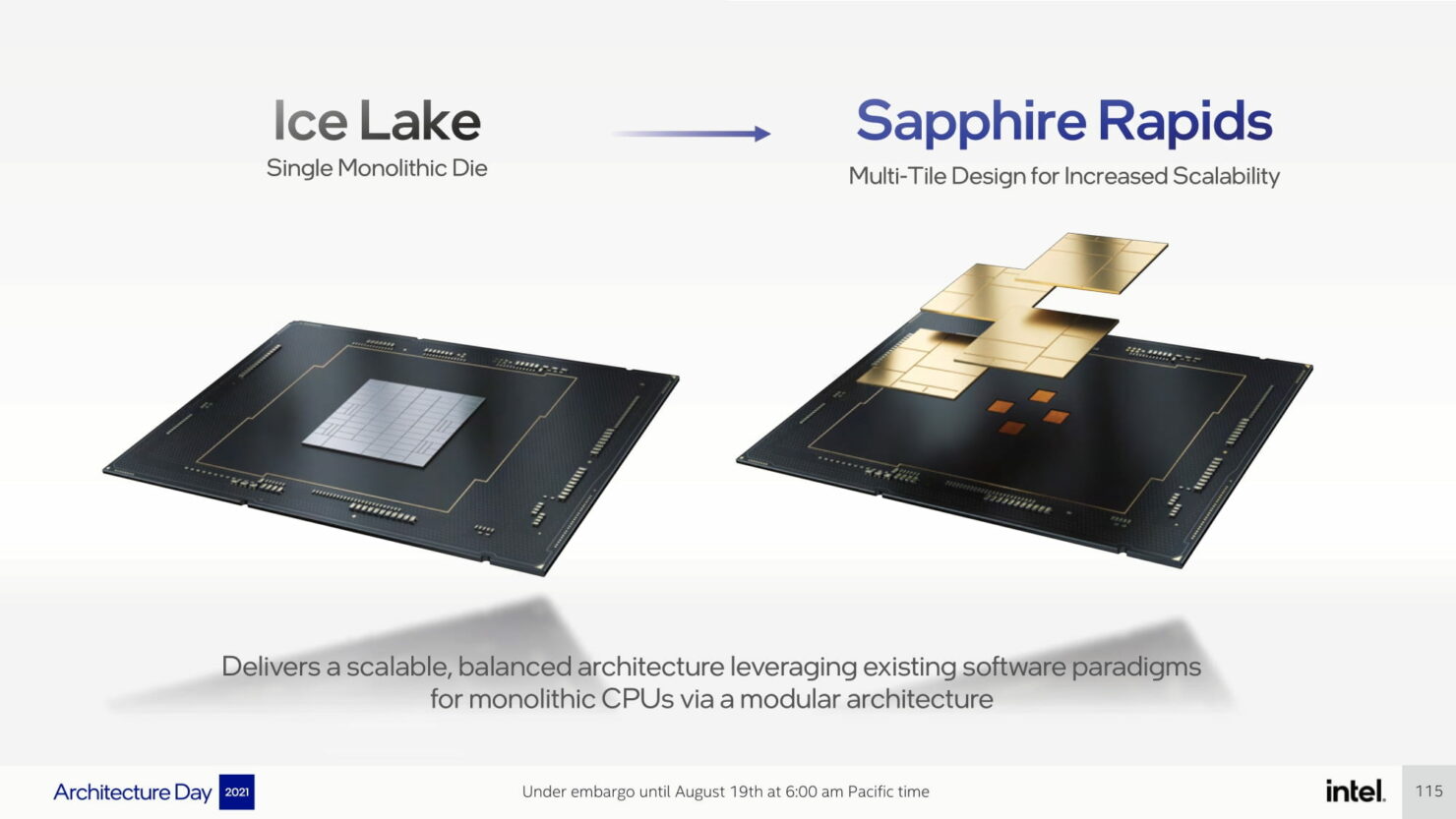

Intel has officially detailed its next-generation Sapphire Rapids-SP CPU lineup, which will be part of the 4th Gen Xeon Scalable family. The Sapphire Rapids-SP lineup will include a range of new technologies, the most important being the seamless integration of multiple chiplets, or 'Tiles' as Intel refers to them, through its EMIB technology.

Intel Fully Details Next-Gen Sapphire Rapids-SP Xeon CPUs, Multi-Tile Chiplet Design Based on 'Intel 7' Process Node

The Sapphire Rapids-SP family will replace the Ice Lake-SP family and will go all in on the 'Intel 7' process node (formerly 10nm Enhanced SuperFin), which will make its formal debut later this year in the Alder Lake consumer family. The server lineup will feature the performance-optimized Golden Cove core architecture, which delivers a 20% IPC improvement over the Willow Cove core architecture. Multiple cores are featured on multiple tiles and packaged together using EMIB.

For Sapphire Rapids-SP, Intel is using a quad multi-tile chiplet design which will come in HBM and non-HBM flavors. While each tile is its own unit, the chip itself acts as one singular SOC, and each thread has full access to all resources on all tiles, consistently providing low latency & high cross-section bandwidth across the entire SOC. Each tile is further composed of three main IP blocks, which are detailed below:

Compute IP

- Cores

- Acceleration Engines

I/O IP

- CXL 1.1

- PCIe Gen 5

- UPI 2.0

Memory IP

We've already taken an in-depth look at the P-Core elsewhere, but some of the key changes that will be offered to the data center platform include AMX, AiA, FP16, and CLDEMOTE capabilities. The Accelerator Engines will increase the effectiveness of each core by offloading common-mode tasks to dedicated hardware, which will increase performance & decrease the time taken to complete a given task.

In terms of I/O advancements, Sapphire Rapids-SP Xeon CPUs will introduce CXL 1.1 for accelerator and memory expansion in the data center segment. There's also improved multi-socket scaling via Intel UPI, delivering up to 4 x24 UPI links at 16 GT/s and a new 8S-4UPI performance-optimized topology. The new tile architecture design also boosts the cache beyond 100 MB, along with Optane Persistent Memory 300 series support.

Intel has also detailed its Sapphire Rapids-SP Xeon CPUs with HBM memory. From what Intel has shown, these Xeon CPUs will house up to 4 HBM packages, all offering significantly higher DRAM bandwidth versus a baseline Sapphire Rapids-SP Xeon CPU with 8-channel DDR5 memory. This is going to allow Intel to offer a chip with both increased capacity and bandwidth for customers that demand it. The HBM SKUs can be used in two modes, an HBM Flat mode & an HBM caching mode.

Intel also showed a demo of its Sapphire Rapids-SP Xeon CPUs running an internal GEMM kernel with and without AMX instructions. The AMX-enabled solution delivered a 7.8x improvement over the non-AMX solution. This demo was also from early silicon, so final performance may further improve. Intel didn't disclose any additional details regarding the test platform.
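For readers unfamiliar with the term, GEMM (general matrix multiply) computes C = alpha * A * B + beta * C. The snippet below is only a plain-Python reference for that operation. It is not Intel's internal kernel, it does not use AMX, and the function name `gemm` and the sample matrices are made up for illustration.

```python
def gemm(alpha, a, b, beta, c):
    """Reference GEMM: returns alpha * (a @ b) + beta * c for lists of lists.

    a is m x k, b is k x n, c is m x n. Illustrative only; real kernels
    (with or without AMX) are heavily blocked and vectorized.
    """
    m, k, n = len(a), len(b), len(b[0])
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(k)) + beta * c[i][j]
            for j in range(n)
        ]
        for i in range(m)
    ]


# Hypothetical sample inputs
A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
C = [[1, 1], [1, 1]]

result = gemm(1, A, B, 0, C)
print(result)  # [[19, 22], [43, 50]]
```

The inner sum of products is the part that matrix-oriented instructions such as AMX are designed to accelerate.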

Intel Sapphire Rapids-SP Xeon CPU Platform

The Sapphire Rapids lineup will make use of 8-channel DDR5 memory with speeds of up to 4800 Mbps & support PCIe Gen 5.0 on the Eagle Stream platform. The Eagle Stream platform will also introduce the LGA 4677 socket, which will replace the LGA 4189 socket used by Intel's Cedar Island & Whitley platforms, which house Cooper Lake-SP and Ice Lake-SP processors, respectively. The Intel Sapphire Rapids-SP Xeon CPUs will also come with the CXL 1.1 interconnect, which will mark a huge milestone for the blue team in the server segment.
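To put the memory figures in perspective, here is a rough back-of-the-envelope calculation of theoretical peak bandwidth. It assumes a 64-bit (8-byte) data path per channel and ignores ECC bits and real-world efficiency, so treat the numbers as upper bounds rather than measured results. The helper name `peak_bandwidth_gbs` is hypothetical.

```python
def peak_bandwidth_gbs(channels: int, mtps: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 1e9 bytes).

    channels  : number of memory channels per socket
    mtps      : transfer rate in MT/s (e.g. 4800 for DDR5-4800)
    bus_bytes : assumed data width per channel in bytes (64-bit = 8)
    """
    return channels * mtps * 1e6 * bus_bytes / 1e9


# Sapphire Rapids-SP: 8-channel DDR5-4800
sapphire_rapids = peak_bandwidth_gbs(channels=8, mtps=4800)
# Ice Lake-SP for comparison: 8-channel DDR4-3200
ice_lake = peak_bandwidth_gbs(channels=8, mtps=3200)

print(f"Sapphire Rapids-SP: {sapphire_rapids:.1f} GB/s")  # 307.2 GB/s
print(f"Ice Lake-SP:        {ice_lake:.1f} GB/s")         # 204.8 GB/s
print(f"Uplift:             {sapphire_rapids / ice_lake:.2f}x")  # 1.50x
```

Under these assumptions, the move from DDR4-3200 to DDR5-4800 on the same channel count works out to a 1.5x increase in peak bandwidth per socket.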

Coming to the configurations, the top part is said to feature 56 cores with a TDP of 350W. What's interesting about this configuration is that it is listed as a low-bin split variant, which means that it will be using a tile or MCM design. The Sapphire Rapids-SP Xeon CPU will be composed of a 4-tile layout with each tile featuring 14 cores.

Following are the leaked configurations:

- Sapphire Rapids-SP 24 Core / 48 Thread / 45.0 MB / 225W

- Sapphire Rapids-SP 28 Core / 56 Thread / 52.5 MB / 250W

- Sapphire Rapids-SP 40 Core / 80 Thread / 75.0 MB / 300W

- Sapphire Rapids-SP 44 Core / 88 Thread / 82.5 MB / 270W

- Sapphire Rapids-SP 48 Core / 96 Thread / 90.0 MB / 350W

- Sapphire Rapids-SP 56 Core / 112 Thread / 105 MB / 350W
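A quick sanity check of the leaked figures shows how they line up with the 4-tile layout. The sketch below takes the core counts, cache sizes, and TDPs from the list above. It assumes two threads per core (Hyper-Threading) and, purely for illustration, that active cores are spread evenly across the four tiles, which Intel has not confirmed.

```python
# (cores, L3 cache in MB, TDP in W) taken from the leaked configurations
LEAKED = [
    (24, 45.0, 225),
    (28, 52.5, 250),
    (40, 75.0, 300),
    (44, 82.5, 270),
    (48, 90.0, 350),
    (56, 105.0, 350),
]

TILES = 4               # quad-tile package
THREADS_PER_CORE = 2    # assumption: 2-way SMT on every SKU


def summarize(cores: int, l3_mb: float, tdp_w: int) -> dict:
    """Derive per-core and per-tile figures for one configuration."""
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "l3_per_core_mb": l3_mb / cores,
        # assumption: cores are enabled evenly across tiles
        "cores_per_tile": cores / TILES,
        "tdp_w": tdp_w,
    }


rows = [summarize(*cfg) for cfg in LEAKED]
for r in rows:
    print(
        f"{r['cores']:>2} cores / {r['threads']:>3} threads: "
        f"{r['l3_per_core_mb']:.3f} MB L3 per core, "
        f"{r['cores_per_tile']:.0f} cores per tile, {r['tdp_w']}W"
    )
```

Every configuration works out to 1.875 MB of L3 per core, and the 56-core part corresponds to all 14 cores enabled on each of the four tiles.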

It looks like AMD will still hold the upper hand in the number of cores & threads offered per CPU, with its Genoa chips pushing for up to 96 cores, while Intel Xeon chips would max out at 56 cores unless Intel plans on making SKUs with a higher number of tiles. Intel will have a wider and more expandable platform that can support up to 8 CPUs at once, so unless Genoa offers more than 2P (dual-socket) configurations, Intel will have the lead in the number of cores per rack, with an 8S rack packing up to 448 cores and 896 threads.
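The per-system arithmetic behind that comparison can be sketched as follows. The socket and core counts come from the paragraph above. Two threads per core on both platforms is an assumption, and `system_totals` is a hypothetical helper name.

```python
def system_totals(sockets: int, cores_per_cpu: int, threads_per_core: int = 2):
    """Return (total cores, total threads) for a multi-socket system."""
    cores = sockets * cores_per_cpu
    return cores, cores * threads_per_core


intel_8s = system_totals(sockets=8, cores_per_cpu=56)  # Sapphire Rapids-SP, 8S
amd_2p = system_totals(sockets=2, cores_per_cpu=96)    # Genoa, 2P

print(f"Intel 8S: {intel_8s[0]} cores / {intel_8s[1]} threads")  # 448 / 896
print(f"AMD 2P:   {amd_2p[0]} cores / {amd_2p[1]} threads")      # 192 / 384
```

So AMD leads per socket (96 vs 56 cores), while Intel leads per system only if the 8-socket topology is actually used.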

The Intel Sapphire Rapids CPUs will contain 4 HBM2 stacks with a maximum memory of 64 GB (16 GB each). Having memory this close to the die would do wonders for certain workloads that require huge data sets, and it will basically act as an L4 cache.

AMD has been taking quite a few wins away from Intel, as seen in the recent Top500 charts from ISC '21. Intel would really need to up its game in the next couple of years to fight back against the AMD EPYC threat. Intel is expected to launch Sapphire Rapids-SP in 2023, followed by HBM variants that are also expected to launch around 2023.

Intel Xeon SP Families:

| Family Branding | Skylake-SP | Cascade Lake-SP/AP | Cooper Lake-SP | Ice Lake-SP | Sapphire Rapids | Emerald Rapids | Granite Rapids | Diamond Rapids |
|---|---|---|---|---|---|---|---|---|
| Process Node | 14nm+ | 14nm++ | 14nm++ | 10nm+ | Intel 7 | Intel 7 | Intel 4 | Intel 3? |
| Platform Name | Intel Purley | Intel Purley | Intel Cedar Island | Intel Whitley | Intel Eagle Stream | Intel Eagle Stream | Intel Mountain Stream / Intel Birch Stream | Intel Mountain Stream / Intel Birch Stream |
| MCP (Multi-Chip Package) SKUs | No | Yes | No | No | Yes | TBD | TBD (Possibly Yes) | TBD (Possibly Yes) |
| Socket | LGA 3647 | LGA 3647 | LGA 4189 | LGA 4189 | LGA 4677 | LGA 4677 | LGA 4677 | TBD |
| Max Core Count | Up To 28 | Up To 28 | Up To 28 | Up To 40 | Up To 56 | TBD | Up To 120? | TBD |
| Max Thread Count | Up To 56 | Up To 56 | Up To 56 | Up To 80 | Up To 112 | TBD | Up To 240? | TBD |
| Max L3 Cache | 38.5 MB L3 | 38.5 MB L3 | 38.5 MB L3 | 60 MB L3 | 105 MB L3 | TBD | TBD | TBD |
| Memory Support | DDR4-2666 6-Channel | DDR4-2933 6-Channel | Up To 6-Channel DDR4-3200 | Up To 8-Channel DDR4-3200 | Up To 8-Channel DDR5-4800 | Up To 8-Channel DDR5-5200? | TBD | TBD |
| PCIe Gen Support | PCIe 3.0 (48 Lanes) | PCIe 3.0 (48 Lanes) | PCIe 3.0 (48 Lanes) | PCIe 4.0 (64 Lanes) | PCIe 5.0 (80 Lanes) | PCIe 5.0 | PCIe 6.0? | PCIe 6.0? |
| TDP Range | 140W-205W | 165W-205W | 150W-250W | 105W-270W | Up To 350W | TBD | TBD | TBD |
| 3D XPoint Optane DIMM | N/A | Apache Pass | Barlow Pass | Barlow Pass | Crow Pass | Crow Pass? | Donahue Pass? | Donahue Pass? |
| Competition | AMD EPYC Naples 14nm | AMD EPYC Rome 7nm | AMD EPYC Rome 7nm | AMD EPYC Milan 7nm+ | AMD EPYC Genoa ~5nm | AMD Next-Gen EPYC (Post Genoa) | AMD Next-Gen EPYC (Post Genoa) | AMD Next-Gen EPYC (Post Genoa) |
| Launch | 2017 | 2018 | 2020 | 2021 | 2023 | 2023? | 2023? | 2024? |